How Generative Engine Optimization Works: A Deep Dive for Bloggers (2026)

You Can't Optimize What You Don't Understand

Most GEO advice sounds like this: write conversationally, add FAQs, include statistics, structure your headings as questions. It is all correct. But here is what most GEO guides skip – the reason behind every single one of those tactics.

Why does a conversational tone make AI more likely to cite you? Why do statistics improve visibility by up to 40%? Why does your opening paragraph matter more than everything else combined? Why can a page ranking #15 on Google still get cited by ChatGPT while your #2 result gets ignored?

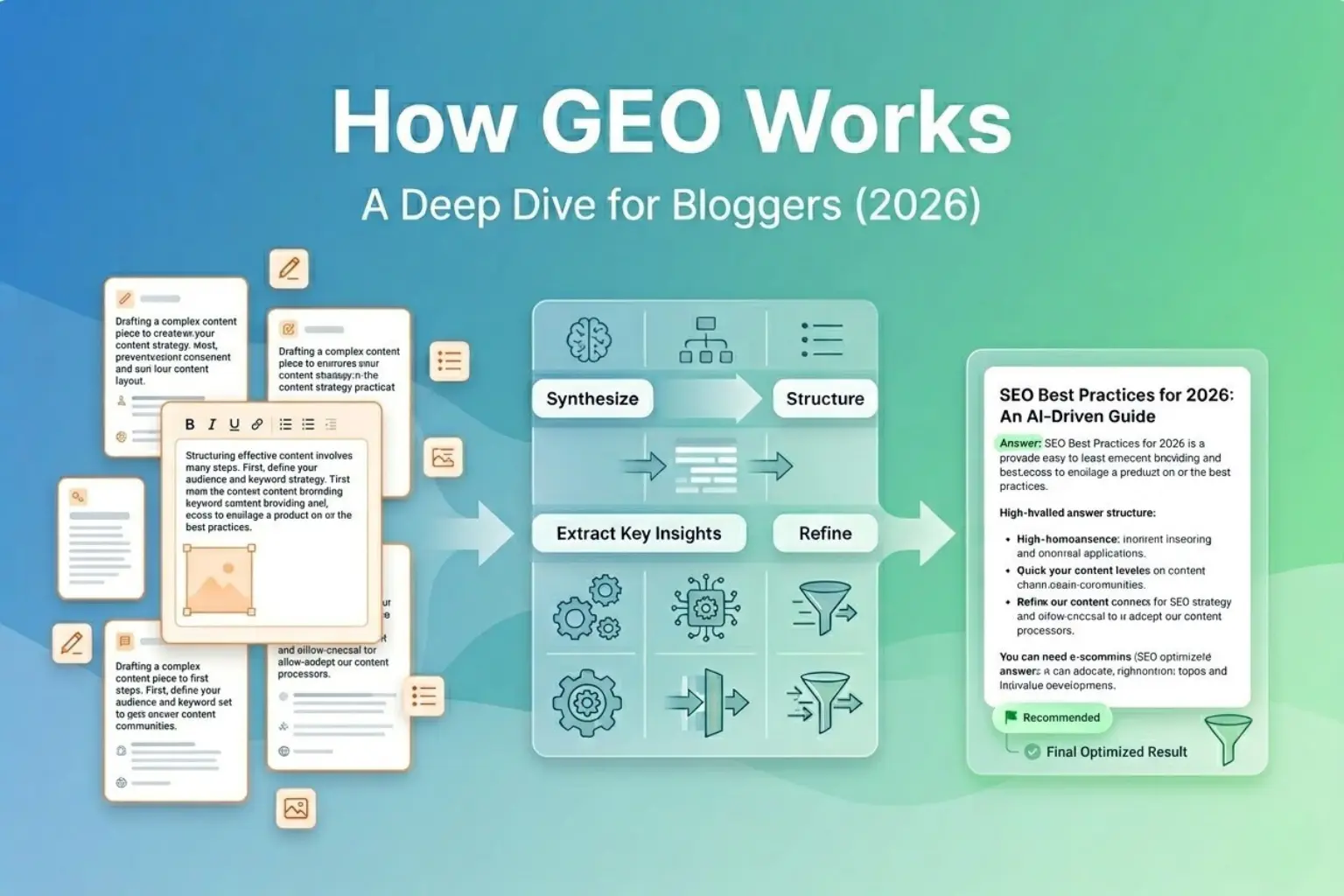

The answers come from understanding exactly how generative AI search systems work – not the surface-level description, but the actual mechanics: the RAG retrieval pipeline, query fan-out, information gain scoring, and the six content signals AI systems evaluate simultaneously before deciding whether to cite your content or skip it entirely.

That is what this guide is for. Think of it as the technical manual behind every GEO tactic you have already heard about. Once you understand the mechanism, optimization becomes deliberate engineering rather than guesswork. And for bloggers building content that needs to earn AI citations in 2026, that difference is everything.

✦ What You Will Understand After This Guide:

- How the RAG pipeline decides which content to retrieve

- Why query fan-out changes your entire keyword strategy

- What Information Gain means and why it beats keyword density

- The 6 signals AI systems score simultaneously

- Why your first 200 words determine 44% of your citations

- How to make every section independently extractable

1. The 3-Step Pipeline: How AI Goes From Query to Citation

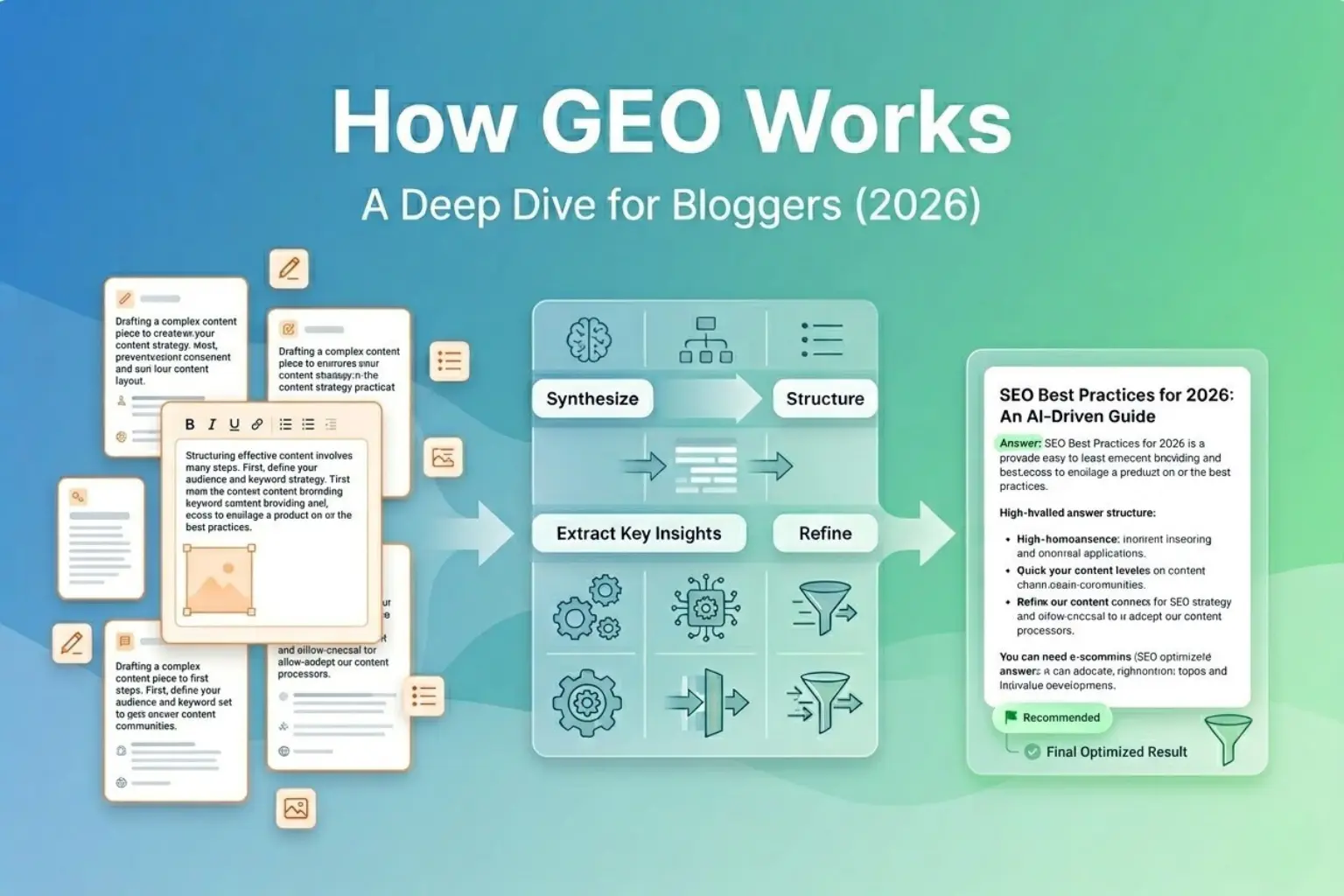

When someone asks ChatGPT or Perplexity a question, they see a fluid, confident answer within seconds. What they do not see is a three-step pipeline that determines exactly which content gets used to build that answer – and which gets permanently passed over.

Every GEO tactic maps back to one of these three stages. If your content fails at any stage, it never makes it to the citation stage.

Query Fan-Out — The AI Breaks the Question Apart

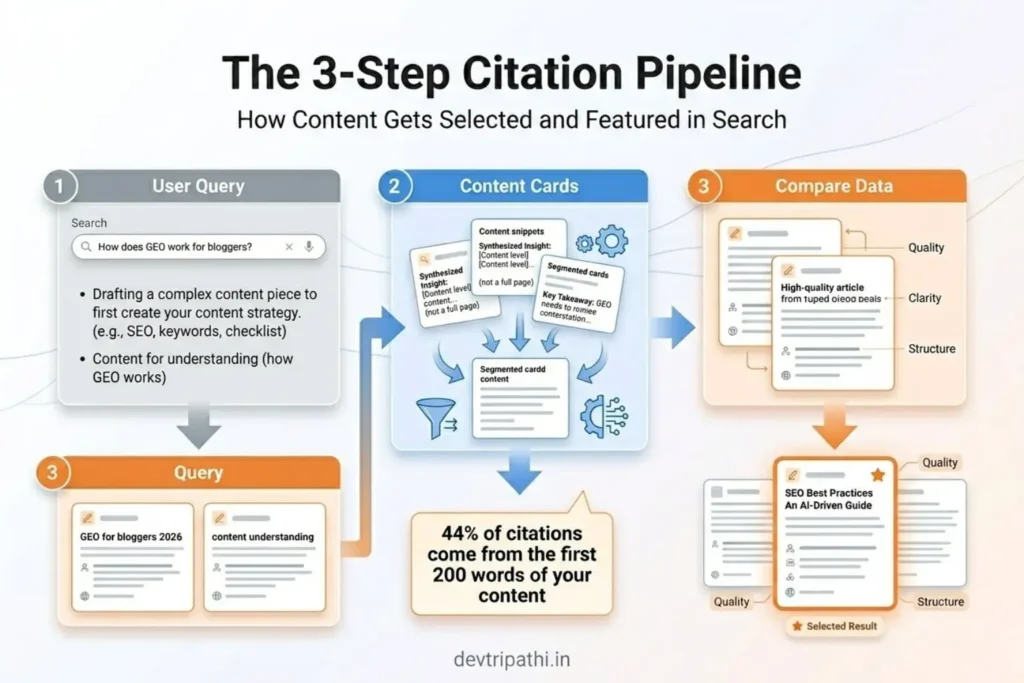

The AI does not search for the full user query. It decomposes the question into three to five targeted sub-queries and searches for each separately. This is query fan-out, and it changes which keywords you need to target.

RAG Retrieval — The AI Searches and Pulls Specific Passages

Using Retrieval-Augmented Generation (RAG), the AI searches the live web and its indexed knowledge base. It does not read your full article — it pulls specific passage chunks semantically similar to each sub-query and feeds those passages to the language model as context.

Real-Time Source Scoring — The AI Compares Your Content

Here is what most bloggers do not realise: the AI does not evaluate your content in isolation. The AI is actively comparing your content to competing sources in real time, deciding which one explains the concept more clearly, provides better evidence, and carries more weight. Your content is scored against every retrieved competitor simultaneously.

2. RAG Architecture: The Engine Behind Every AI Search Answer

Retrieval-Augmented Generation (RAG) is the technical architecture powering ChatGPT Search, Perplexity, Google AI Overviews, and most major AI search systems in 2026. Understanding how RAG works is the single most important thing you can do to improve your GEO strategy – because every content optimization decision flows from it.

Here is the core insight from Frase.io’s RAG analysis: ‘Your job isn’t only to write a great article. Your job is to create passages that retrieval systems can confidently pull and reuse.’ RAG does not read your article the way a human does. It chunks your content into individual passages and evaluates each chunk independently.

How RAG Works Technically – Step by Step

- Semantic conversion: When a user asks a question, the RAG system converts it into a dense vector embedding – a numerical representation of its meaning – not a list of keywords

- Passage retrieval: The system searches its knowledge base (indexed web pages, vector databases) for passages whose embeddings are semantically similar to the query embedding. This is concept matching, not keyword matching

- Retrieval filtering: Systems apply filters based on recency, domain authority, content type, and metadata. In top-K selection, typically the 5 to 20 most relevant chunks are selected for context augmentation (Security Boulevard, February 2026)

- LLM synthesis: The retrieved chunks are combined with the original query to create an enriched prompt for the language model, which generates the final answer and cites the most useful sources
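The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any production system is implemented: the `embed` function is a toy bag-of-words stand-in for a real dense embedding model (so it matches shared words, not meaning), and the function names, sample passages, and prompt layout are all assumptions made for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a dense embedding: a bag-of-words term count.
    Real systems use a neural embedding model instead (assumption for illustration)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Top-K selection: return the k passages most similar to the query."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Combine the retrieved chunks with the original query into an enriched prompt."""
    context = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical passages, each standing in for one chunk of an indexed page.
passages = [
    "RAG retrieves passage chunks and feeds them to the language model.",
    "Our bakery opens at nine every morning.",
    "Query fan-out splits one question into several sub-queries.",
]
question = "How does RAG retrieve passage chunks?"
top = retrieve(question, passages, k=1)
prompt = build_prompt(question, top)
```

The point to notice is that ranking happens per passage, never per article: each chunk competes on its own, which is why a paragraph that depends on its neighbours for meaning is a weak retrieval candidate.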

What This Means for How You Write

Because RAG operates at the passage level – not the article level – every paragraph in your blog needs to function as a standalone, complete unit of information. Discovered Labs found that ‘RAG architecture determines which content an AI should pull in to answer a query. Write in 40-60 word modular paragraphs that can stand alone contextually. Each chunk should make sense if extracted independently.’

A well-written three-paragraph argument that builds beautifully to a conclusion creates three confusing, incomplete chunks for the RAG system instead of three clean, citable answers. Restructure your writing so each paragraph answers, not builds.

✦ The RAG Self-Test for Every Paragraph: Pull any paragraph out of your article and read it completely alone, without any surrounding context. Does it make complete sense? Does it answer something? If you need the paragraph before or after it to understand what it means, restructure it until it stands alone. This is the most important single habit for GEO-optimized writing.

76.4% of highly cited pages were updated within the last 30 days. Source: Perplexity citation data, Security Boulevard analysis, 2026

→ Related on devtripathi.in: Deep Search and Gemini 2.5: Enhancing Content Visibility in AI-Powered Search

3. Query Fan-Out: Why One Question Becomes Five Searches

Query fan-out is the process by which AI systems decompose a complex user question into multiple smaller sub-queries before searching for any of them. It is one of the most important – and most overlooked – mechanics in GEO.

LLMrefs explains it with a concrete example: when someone asks ChatGPT ‘What is the best VPN for streaming Netflix in Europe?’, the AI does not search that full phrase. It breaks it into three separate searches: ‘best VPN 2026’, ‘VPN Netflix streaming’, and ‘VPN Europe servers’. Your content needs to rank for those component queries, not just the original head term.

A Blogger-Specific Fan-Out Example

Say your target query is: ‘How should a new blogger implement GEO in 2026?’ The AI might decompose this into four sub-queries:

- GEO for bloggers 2026

- How new blogs get AI citations

- GEO implementation checklist beginners

- Content structure for AI search new sites

Your pillar post on GEO may target the main query – but if you have no content addressing those sub-queries individually, you miss out on retrieval for the fan-out searches that feed the AI’s synthesis. This is one of the strongest arguments for building a topical content cluster: each supporting article targets the sub-queries your main topic generates when decomposed.
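One rough way to spot cluster gaps is to compare each fan-out sub-query against the titles you have already published. The sketch below uses simple word overlap as the matching rule; that rule, the 0.5 threshold, the function names, and the two sample titles are all assumptions for illustration, and a real audit would need human judgment on top.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased word set for a query or title."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def coverage_gaps(sub_queries: list[str], titles: list[str], min_overlap: float = 0.5) -> list[str]:
    """Return sub-queries for which no title shares at least min_overlap of the query's words."""
    gaps = []
    for query in sub_queries:
        q_tokens = tokens(query)
        if not q_tokens:
            continue  # nothing to match against
        best = max((len(q_tokens & tokens(t)) / len(q_tokens) for t in titles), default=0.0)
        if best < min_overlap:
            gaps.append(query)
    return gaps


# Sub-queries from the example above; the titles are hypothetical existing posts.
sub_queries = [
    "GEO for bloggers 2026",
    "How new blogs get AI citations",
    "GEO implementation checklist beginners",
    "Content structure for AI search new sites",
]
titles = [
    "GEO for Bloggers: The 2026 Guide",
    "A GEO Implementation Checklist for Beginners",
]
gaps = coverage_gaps(sub_queries, titles)
```

With these two titles, the citation and content-structure sub-queries come back as gaps, which marks them as candidates for supporting articles in the cluster.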

How to Reverse-Engineer Fan-Out Sub-Queries

- Type your target question into ChatGPT and Perplexity – examine what clarifying follow-up questions they ask. Those are your sub-queries

- Check Google’s ‘People Also Ask’ section for your main keyword – these are common query decompositions

- Use AnswerThePublic to map the question ecosystem around your core topic

- Read Reddit threads on your topic – thread titles and top comments reveal natural query fragments

- Mirror the H2 and H3 headings in top-ranking competitors – these are the proven sub-topic angles Google and AI both reward

→ Related: Optimizing for AI Mode: New SEO Strategies in the Age of Generative Search

4. Information Gain: The Signal That Replaces Keyword Density

Traditional SEO rewarded keyword density – how often your target phrase appeared in your content. GEO rewards something fundamentally different: Information Gain.

Information Gain is the degree to which your content adds something new to what AI systems already know about a topic. ClickCentric defines it clearly: ‘AI Overviews cite content that provides unique facts, specific data, and definitive statements – not content that merely summarises what everyone else already says.’

In practical terms: if your blog post says the same things as the ten articles already ranking for your topic, just rephrased, your Information Gain is near zero. AI systems have already seen that information. There is no reason to cite you. But if you include a specific statistic no other article uses, an original example, a first-hand experience, a comparison table with unique data – you add information the AI cannot get elsewhere. That is what gets cited.

Fact Density – The Practical Measure of Information Gain

12AM Agency introduces a related concept that is highly actionable for bloggers: Fact Density, defined as the ratio of specific, verifiable factual statements to total word count. GEO rewards high fact density – concise, specific, attribute-rich content – over padded long-form prose.

Their contrast is stark: ‘Low fact density: We have been in business for a long time and we really care about our customers. High fact density: Founded in 2015, 12AM Agency has managed $50M in ad spend and increased organic traffic for 200+ SMBs by an average of 45%.’ The second version gives an AI system four specific, citable data points. The first gives zero.

Apply this standard to every paragraph in your blog: can you make any claim more specific? Can you add a number, a timeframe, a source, a comparison? If yes, do it. Vague claims are invisible to AI citation systems. Specific, attributed claims are magnets.
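To make that audit less subjective, you can approximate fact density with a crude proxy: the share of words that contain a digit. This is an assumption for illustration, not 12AM Agency's method or any official metric, and the function names are made up for the sketch.

```python
import re


def numeric_tokens(text: str) -> int:
    """Count whitespace-separated tokens that contain at least one digit."""
    return sum(1 for token in text.split() if re.search(r"\d", token))


def fact_density(text: str) -> float:
    """Digit-bearing tokens per 100 words: a rough proxy for specific claims."""
    words = text.split()
    return 100 * numeric_tokens(text) / len(words) if words else 0.0


low = "We have been in business for a long time and we really care about our customers."
high = ("Founded in 2015, 12AM Agency has managed $50M in ad spend and "
        "increased organic traffic for 200+ SMBs by an average of 45%.")

low_score = fact_density(low)
high_score = fact_density(high)
```

The proxy separates the two example sentences cleanly, but it also counts the brand name "12AM" as a numeric token, so treat the score as a prompt for manual review and not as a measurement.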

40% higher citation rate for quantitative claims versus qualitative statements. Source: Wellows analysis of 30 million citations

“GEO rewards authority and answer quality over keyword optimisation. Teams that apply pure SEO tactics to GEO underperform because the ranking signals are fundamentally different. Anonymous content or ‘content team’ bylines are GEO penalties. Every piece of GEO-optimised content needs a named, credentialled author with verifiable external presence.” – Enrich Labs, Generative Engine Optimization: Complete 2026 Guide

External reference: Princeton University GEO Research – KDD 2024

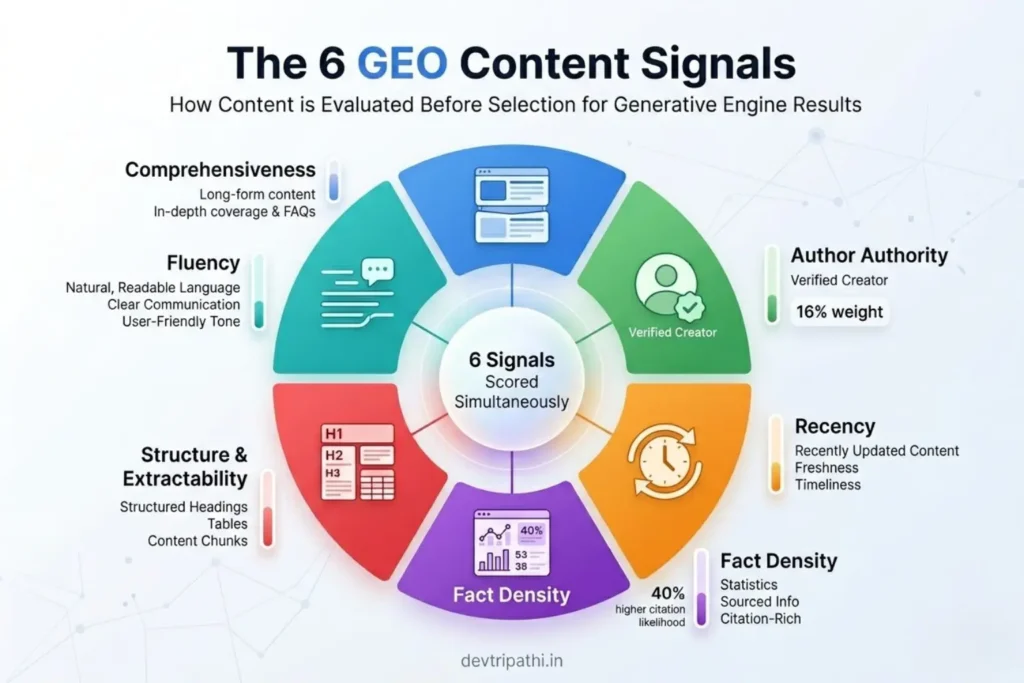

5. The 6 Content Signals AI Systems Score in Real Time

When an AI system retrieves your content and decides whether to cite it, it is not making a single binary judgment. It is scoring your content across six distinct signals simultaneously – and comparing those scores against every competing source retrieved for the same sub-query.

These signals are derived from the Princeton GEO paper (KDD 2024), The HOTH’s campaign data analysis, and Discovered Labs’ research into RAG citation patterns across over 30 million citations.

Signal 1: Comprehensiveness – Does Your Content Anticipate Every Sub-Question?

AI engines prefer content that covers a topic from every angle – addressing the main question and every likely follow-up. Thin pages get skipped. Definitive resources that address edge cases, common misconceptions, and related subtopics consistently earn more citations.

The benchmark from Discovered Labs: long-form content exceeding 2,000 words earns 3x more citations than shorter posts. But length alone is not the answer – comprehensiveness is. The question to ask is: after reading this article, would a user have any remaining unanswered questions about this topic? If yes, add those answers. That depth is what AI systems reward.

Signal 2: Author Authority – Who Wrote It and Can It Be Verified?

Author credentials carry 16% weight in AI citation decisions (BrightEdge, 2025) – up from 8% in 2024. Named, credentialled authors with verifiable external presence (LinkedIn profiles, published work, industry mentions) are cited significantly more than anonymous content or generic ‘Staff Writer’ bylines.

Enrich Labs is direct: ‘Anonymous content or content team bylines are GEO penalties.’ The implication for bloggers is clear – your author bio is not a cosmetic addition. It is a measurable citation signal. A detailed bio naming your credentials, experience, and verifiable external presence directly improves how often AI systems trust and cite your content.

Signal 3: Recency – When Was This Content Last Updated?

AI systems weight recency heavily when selecting sources, particularly for fast-moving topics. Perplexity data shows 76.4% of highly cited pages were updated within 30 days (Security Boulevard, 2026). An article refreshed with a visible ‘Last updated: March 2026’ timestamp consistently beats a stale 2023 article on the same topic – even if the older article has more backlinks and a higher organic ranking.

Build a quarterly content refresh cadence for all GEO-priority pages. When refreshing: add new statistics with current-year sources, revise any outdated examples, add a ‘What changed in 2026’ section to evergreen posts, and update the timestamp visibly. Recency is a citation signal you can control directly.
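A refresh cadence is easier to keep when overdue pages are listed automatically. The sketch below assumes you maintain a mapping of page paths to last-updated dates; the paths, the dates, and the 90-day approximation of "quarterly" are all hypothetical choices for the example.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return pages last updated more than max_age_days ago, oldest first."""
    overdue = [
        (today - updated, path)
        for path, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return [path for _, path in sorted(overdue, reverse=True)]


# Hypothetical GEO-priority pages and their last visible update.
pages = {
    "/geo-guide": date(2026, 3, 1),
    "/rag-explained": date(2025, 11, 15),
    "/old-seo-checklist": date(2023, 6, 10),
}
to_refresh = stale_pages(pages, today=date(2026, 3, 20))
```

Lower `max_age_days` to 30 for pages where the recency signal matters most.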

Signal 4: Fact Density – How Many Specific, Citable Claims Per 100 Words?

As covered in Section 4, fact density is the GEO equivalent of keyword density – except AI systems reward specificity, not repetition. Wellows’ analysis of over 30 million citations found quantitative claims receive 40% higher citation rates than qualitative statements. The Princeton KDD 2024 study found adding statistics improved AI visibility by up to 40%.

Practical rule: for every general claim you make, ask whether you can replace it with a specific one. ‘AI search is growing’ → ‘AI-referred web sessions grew 527% year-over-year in the first five months of 2025 (Previsible, 2025).’ Every upgrade of this kind adds a citable data point and raises your fact density score.

Signal 5: Structure and Extractability – Can AI Pull Each Section Cleanly?

RAG systems pull content at the passage level. Poorly structured content – long unbroken paragraphs, vague headings, answers buried after paragraphs of context – produces ambiguous, unusable chunks. Well-structured content produces clean, citable answers that AI systems can extract confidently.

Key structural signals: clear H1 → H2 → H3 hierarchy where each section covers one distinct topic; question-format headings that mirror user queries (‘How does RAG affect GEO?’ outperforms ‘RAG Overview’); 40-60 word paragraphs; and tables. Discovered Labs found content with tables gets cited 2.5x more often – tables are structurally perfect extraction units for RAG systems.

Schema markup enables AI engines to extract information with 300% higher accuracy compared to unstructured content (StubGroup, 2026). FAQPage schema, Article schema, and HowTo schema all provide machine-readable signals that directly raise extractability scores.

Signal 6: Fluency – Does Your Content Sound Like Trusted Human Expertise?

The Princeton GEO paper identified fluency – natural, authoritative, clearly written prose – as a consistent predictor of AI citation. AI systems are trained on human language and are increasingly effective at distinguishing authentic expertise from keyword-stuffed or AI-generated filler.

The optimal register for GEO is what Frase.io calls ‘accessible expertise’ – writing as you would explain something to a knowledgeable colleague. Professional but not academic, clear but not oversimplified, authoritative but not robotic. Keyword stuffing is actively counterproductive: ‘The AI sees right through it’ (The HOTH, 2026).

6. How AI Systems Compare Your Content Against Competitors

Here is the competitive reality of GEO that changes how you think about content quality: AI systems do not evaluate your content in isolation. They compare it in real time against every source retrieved for the same sub-query.

Traditional search engines rank pages individually – each page is scored on its own merits and placed in an ordered list. Generative AI systems do something fundamentally different. As The HOTH explains: ‘Traditional search engines evaluate pages individually. Generative engines evaluate them against each other. The AI is deciding which source explains the concept more clearly, provides better evidence, and carries more weight.’

Vector Search: Scoring by Meaning, Not Keywords

AI citation scoring uses dense vector search – semantic similarity matching – rather than keyword matching. Your content is converted into numerical embeddings representing meaning, and the AI identifies the passages most semantically similar to the sub-query, regardless of exact phrasing.

This is why keyword stuffing fails spectacularly in GEO. The AI is scoring by conceptual proximity, not word frequency. A passage that says ‘large language models extract citable information from well-structured sources’ scores high for a query about GEO without containing the phrase ‘generative engine optimization’ at all. The AI sees meaning, not surface text.

Paul Teitelman explains the practical implication: ‘If your content uses the right semantic vectors – the same conceptual language as the query – you are retrieved. If you are retrieved, you are cited.’ Use natural synonyms, write in the vocabulary of your topic, and cover the conceptual ground thoroughly without forcing specific phrases.

Citation Displacement – Your Competitor Can Drop Your Citation Rate

Because AI systems compare sources in real time, your GEO performance is partly determined by your competitors’ quality, not just your own. If a competitor publishes a more comprehensive, more data-rich article on your target topic, your citation frequency for that topic can drop – even if you have not changed anything.

StubGroup’s projection is blunt: ‘Industry analysis projects that by mid-2026, dominant citation positions will have calcified around early adopters. Delayed implementation creates permanent competitive disadvantage as citation patterns become entrenched.’ If that projection holds, the window for establishing citation authority before competitive saturation is closing.

→ Related: Understanding AI Mode Analytics: Measuring Success in a Generative Search Landscape

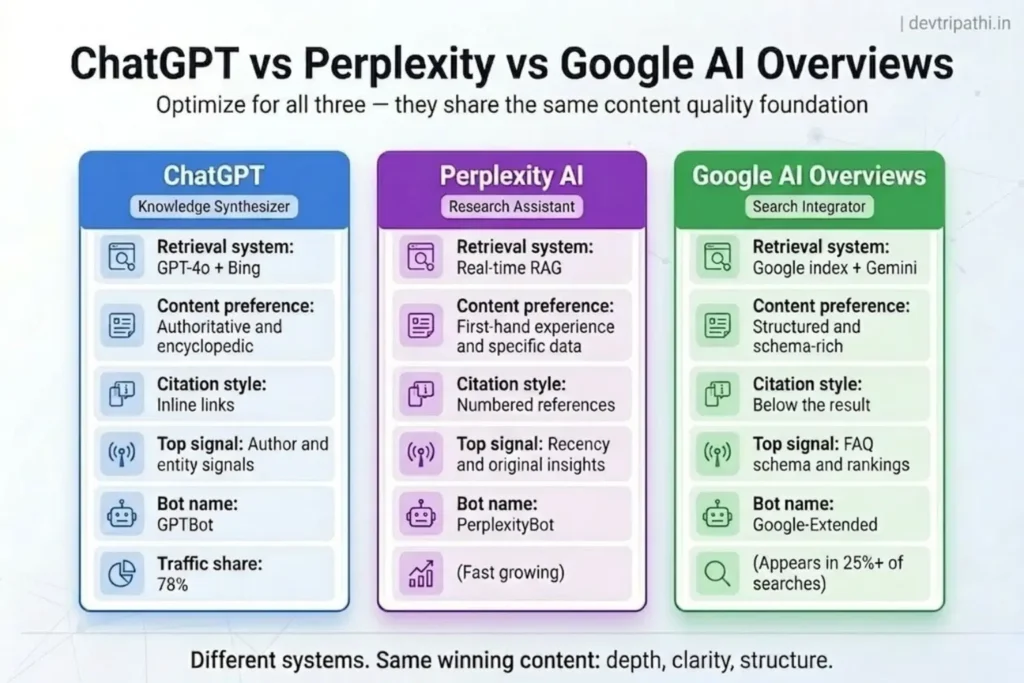

7. Platform Deep Dive: ChatGPT vs Perplexity vs Google AI Overviews

Each major AI search platform has different retrieval architecture, content preferences, and citation biases. Optimizing for all three simultaneously requires understanding what makes each distinct.

ChatGPT – Encyclopedic Authority, Bing-Powered

ChatGPT Search retrieves content via Bing’s index. Wikipedia accounts for 47.9% of ChatGPT’s top citations – revealing a strong preference for authoritative, encyclopedically structured content with clear definitions, organised sections, and verifiable facts. ChatGPT accounts for 78% of all AI referral traffic to websites (Nest Content, 2026).

For ChatGPT optimisation: ensure GPTBot is not blocked in your robots.txt; structure content with clear entity definitions early in each section; build Organization schema on your homepage connecting to LinkedIn and verified profiles; and write with a consistent, authoritative voice that reads like a trusted reference source rather than a blog post.

Perplexity – Real-Time RAG, Rewards First-Hand Experience

Perplexity uses real-time RAG and has the strongest recency bias of the three platforms – 76.4% of its highly cited pages were updated within 30 days. It also rewards first-hand, experience-based content more explicitly than ChatGPT. Frase.io’s analysis is specific: content like ‘After testing 12 project management platforms over 6 months with our 15-person team, we found…’ outperforms generic recommendations because it provides specific numbers, real experience, and verifiable outcomes.

For Perplexity optimisation: refresh your priority pages at least monthly; include first-hand examples and specific metrics wherever possible; and ensure PerplexityBot is not blocked in your robots.txt or Cloudflare settings.

Google AI Overviews – Most Connected to Traditional SEO

Google AI Overviews are most directly linked to organic search performance. Earlier data showed 76% of AI Overview citations came from Google’s top-10 results; Ahrefs’ 2026 analysis shows this has narrowed to 38% – meaning AI Overviews increasingly cite content outside the top 10, creating opportunity for lower-ranking pages with strong GEO signals.

For Google AI Overviews: FAQPage schema is disproportionately influential – it explicitly signals structured Q&A content ready for extraction; Core Web Vitals performance matters; and strong EEAT signals (detailed author bio, About page with Organisation schema) are weighted most heavily by Google’s AI citation system of the three platforms.

→ Related: AI Mode vs Traditional Search: Comparing User Experiences and SEO Outcomes

8. Technical GEO: Making Your Site AI-Crawlable

GEO has a technical layer that most bloggers overlook – and it comes before every content decision. A perfectly optimized article is completely invisible if AI crawlers cannot access your site.

▲ Critical Check – Do This Before Any Content GEO Work: Many WordPress sites running Cloudflare have AI crawlers blocked by default. Cloudflare changed its default configuration in 2024 to block AI bots automatically. Open your Cloudflare dashboard, navigate to AI Crawl Metrics, and verify that GPTBot (OpenAI), Google-Extended (Google), PerplexityBot (Perplexity), and ClaudeBot (Anthropic) are NOT blocked. If they are blocked, all GEO optimisation is wasted – your content is completely invisible to AI search regardless of quality.

The AI Crawler Checklist for Bloggers

- Check robots.txt – confirm GPTBot, Google-Extended, PerplexityBot, ClaudeBot, and OAI-SearchBot are not disallowed

- Check Cloudflare – navigate to Security → Bots → AI Crawl Metrics and verify no AI bots are blocked

- Check server logs – search for ‘ChatGPT-User’ user agent to confirm ChatGPT is visiting your site

- Confirm server-side rendering – AI crawlers cannot execute JavaScript; content rendered client-side (React, Vue, Next.js without SSR) may be invisible to AI bots even when Googlebot renders it correctly

- Confirm no login walls or paywalls – content locked behind authentication is not crawlable by any AI system

- Check Core Web Vitals – pages with First Contentful Paint under 0.4 seconds average 6.7 AI citations vs 2.1 for slower pages (SE Ranking, 2025)
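The robots.txt item in the checklist can be verified with Python's standard `urllib.robotparser` module. This is a minimal sketch: the sample robots.txt content and the `example.com` URL are placeholders, and it covers robots.txt rules only, not blocks applied at the Cloudflare or firewall level.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_AGENTS = ["GPTBot", "Google-Extended", "PerplexityBot", "ClaudeBot", "OAI-SearchBot"]


def blocked_agents(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI user agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


# Placeholder robots.txt that blocks one AI crawler and allows everything else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
blocked = blocked_agents(sample)
```

To audit a live site, paste the contents of your own robots.txt into `sample` and pass one of your real post URLs as `url`.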

Schema Markup – Giving AI Systems a Machine-Readable Map

Schema markup enables AI engines to extract information with 300% higher accuracy compared to unstructured content (StubGroup, 2026). It provides explicit, machine-readable signals about what your content contains – what type of content it is, who wrote it, what questions it answers, and how the information is structured.

Priority schema types for bloggers: Article schema (or BlogPosting) for every post, identifying the author, publication date, and topic; FAQPage schema for all FAQ sections – this explicitly signals question-answer pairs ready for extraction; and Person schema in your author bio connecting your credentials to a verified identity.
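For FAQ sections, the markup follows schema.org's FAQPage type: a `mainEntity` list of `Question` items, each with an `acceptedAnswer`. Plugins normally generate this for you, but a short sketch shows what ends up on the page. The helper name and the sample question are assumptions for illustration.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Sample pair taken from this guide's own FAQ wording.
markup = faq_jsonld([
    ("What is RAG?", "RAG stands for Retrieval-Augmented Generation."),
])
```

The resulting string goes inside a `<script type="application/ld+json">` tag in the page HTML, and the visible FAQ text should match the markup.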

llms.txt – The Forward-Looking Technical Step

An llms.txt file is an emerging standard similar to robots.txt, designed to help AI systems understand your site structure, identify your most important content, and access efficiently structured summaries. SearchEngineLand recommends adding llms.txt as a technical GEO best practice in 2026 – it reduces AI crawl friction and can improve citation accuracy by giving AI systems an explicit index of your key pages.
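As a rough sketch, an llms.txt file can be generated from a list of your key pages. The layout below (a title line, a short blockquote summary, then headed sections of annotated links) reflects the public llms.txt proposal as commonly described; because the format is still emerging, treat the exact structure as an assumption and check the current proposal before publishing. The site name and URLs are hypothetical.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render a markdown-style llms.txt: title, summary, then sections of links.
    Each link is a (title, url, note) tuple."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site and pages, used only to show the output shape.
llms_txt = build_llms_txt(
    "Example Blog",
    "Guides on generative engine optimization for bloggers.",
    {
        "Guides": [
            ("How GEO Works", "https://example.com/how-geo-works", "RAG, fan-out, and citation signals"),
        ],
    },
)
```

Save the output as `llms.txt` at the site root, alongside robots.txt.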

→ Related: Personalization in AI Mode: Tailoring Content for Enhanced User Engagement

9. The RAG-Friendly Writing System for Bloggers

Now that you understand the mechanism, here is the writing system that applies every GEO principle covered in this guide. These are not arbitrary style preferences – each rule directly addresses a specific stage of the AI citation pipeline.

Rule 1: BLUF Structure – Answer First, Context Second

AI systems extract 44% of their citations from the opening section of articles. Your answer must come first – not after context, not after a hook, not after background. The HOTH is direct: ‘Write your first paragraph as if it is the only thing the AI will read. Because sometimes it is.’

The BLUF (Bottom Line Up Front) structure: your first 40-60 words directly and completely answer the primary query. Everything that follows – context, nuance, examples, depth – comes after. This mirrors the TL;DR-first content structure that top-performing GEO content consistently uses (Discovered Labs, 2026).

Rule 2: Question-Format H2 and H3 Headings

AI systems use heading hierarchies to understand what each section is about before reading it. A heading that directly mirrors a user query – ‘How Does RAG Decide Which Content to Cite?’ – is semantically aligned with the sub-queries AI systems generate during fan-out. A generic heading like ‘RAG Overview’ is not.

Paul Teitelman’s recommendation: ‘Your H2s and H3s should mirror the exact phrasing of People Also Ask queries.’ Audit your top posts in Google Search Console. Look at the actual queries people use to find each page. Rewrite your headings as questions that mirror those real queries – this is one of the highest-ROI, lowest-effort GEO changes available for existing content.

Rule 3: The 40-60 Word Paragraph Discipline

RAG systems chunk content at the paragraph level. Paragraphs shorter than 40 words often lack sufficient context to function as standalone answer chunks. Paragraphs longer than 80 words contain too much information for clean extraction and produce ambiguous, cut-off passages. Discovered Labs’ research sets the optimal range at 40-60 words per paragraph for RAG extraction.

This does not mean every paragraph must be exactly 40-60 words – it means being conscious of extractability when you write. Each paragraph should express one complete thought, make one direct point, and stand alone without the surrounding text.
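A quick script can flag paragraphs that fall outside the target range before you publish. This sketch assumes paragraphs are separated by blank lines and counts words by splitting on whitespace; the function name and the placeholder text are made up for the example.

```python
def paragraph_report(article: str, low: int = 40, high: int = 60) -> list[tuple[int, int, str]]:
    """Return (paragraph index, word count, verdict) for each blank-line-separated paragraph."""
    report = []
    paragraphs = [p.strip() for p in article.split("\n\n") if p.strip()]
    for index, paragraph in enumerate(paragraphs):
        count = len(paragraph.split())
        if count < low:
            verdict = "short"
        elif count > high:
            verdict = "long"
        else:
            verdict = "ok"
        report.append((index, count, verdict))
    return report


# Placeholder article: three paragraphs of 12, 50, and 95 words.
sample = "\n\n".join([
    " ".join(["word"] * 12),
    " ".join(["word"] * 50),
    " ".join(["word"] * 95),
])
report = paragraph_report(sample)
```

Word count is only a first filter. A paragraph flagged "ok" still needs to pass the standalone test, and a "short" opening sentence under a heading can be fine if it is a complete answer.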

Rule 4: Tables, Lists, and Structured Comparisons

Content with tables gets cited 2.5x more often than prose-only content (Discovered Labs). LLMrefs’ analysis of 10,000 real-world queries found pages with structured lists had 30-40% higher AI visibility. Tables are structurally ideal RAG extraction units – they present information in clearly labelled, semantically structured formats that AI systems can pull as complete, self-contained data blocks.

Use numbered lists for sequential processes (AI extracts them as step-by-step answers), bullet lists for feature comparisons and key points, and tables for any head-to-head comparison. Every time you find yourself writing a comma-separated list in prose, consider whether a table or bullet list would serve the reader – and the AI – better.

Rule 5: Every Claim Gets a Source Attribution

‘AI search is growing’ is not citable. ‘527% year-over-year growth in AI-referred sessions in the first five months of 2025 (Previsible, 2025)’ is citable. LLMrefs is explicit: ‘Cite statistics with sources. When you include data, name the source. AI systems treat sourced claims as strong authority signals.’ Every specific claim needs: the claim, the number, the source name, and the year.

Rule 6: Comprehensive FAQ Sections (15+ Questions)

FAQ sections are among the most powerful and most underused GEO tools. Only 34% of websites use FAQPage schema – making this a major competitive gap. For every GEO-priority post, add a minimum of 15 questions drawn from People Also Ask data, Reddit threads, Quora answers, and ChatGPT prompts on your topic. Structure each FAQ with an H3 heading for the question and a direct 2-4 sentence answer. Add FAQPage schema markup via Rank Math or Yoast. Content in Q&A format gets extracted by AI systems 2.3x more than prose-only content.

→ Related:

- What Is Generative Engine Optimization (GEO)? Complete 2026 Guide →

- GEO vs SEO: What’s the Difference and Why You Need Both in 2026 →

- Google AI Mode: Transforming Search and SEO Strategies in 2025 →

- Understanding AI Mode Analytics: Measuring GEO Success →

- Optimizing for AI Mode: New SEO Strategies in the Age of Generative Search →

- AI Mode vs Traditional Search: Comparing User Experiences →

- Deep Search and Gemini 2.5: Enhancing Content Visibility →

- Personalization in AI Mode: Tailoring Content for Enhanced Engagement →

- The Ultimate On-Page SEO Checklist for 2025 →

- How to Update Old Content for SEO: A Complete Guide →

11. Frequently Asked Questions About How GEO Works

1. What is RAG and why is it the foundation of GEO?

RAG stands for Retrieval-Augmented Generation. It is the technical architecture powering ChatGPT Search, Perplexity, and Google AI Overviews. Instead of relying only on training data, RAG actively searches the web, retrieves relevant passage chunks from multiple pages, and feeds them to the language model as context for generating answers. Your content must survive RAG retrieval (technically accessible, semantically relevant) before any citation can occur – making RAG the foundation of every GEO decision.

2. What is query fan-out and why does it change keyword targeting?

Query fan-out is the process by which AI systems decompose a complex user question into three to five smaller sub-queries and search for each separately. When someone asks ‘best GEO strategy for new bloggers’, the AI might search for ‘GEO for beginners’, ‘AI citation tips for new blogs’, and ‘GEO implementation checklist’ as separate queries. Your content needs to rank for these component sub-queries – not just the main head term – which is why topical content clusters outperform isolated blog posts in GEO.

3. What is Information Gain and how do I increase it in my blog posts?

Information Gain is the degree to which your content adds something new to what AI systems already know about a topic. You increase it by: including specific statistics that are not in competing articles; adding first-hand examples with real numbers and outcomes; publishing original data or research; making claims more specific (‘527% YoY growth’ vs ‘rapid growth’); and covering angles that competing articles miss. Generic content summarising what everyone else says has near-zero Information Gain and rarely gets cited.

4. Why do tables get cited 2.5x more often by AI systems?

Tables are structurally ideal for RAG extraction because they present information in clearly labelled, semantically structured units. A comparison table gives AI systems a self-contained data block they can extract as a complete unit without losing meaning. The HTML structure of a table (table, thead, tbody, th tags) provides explicit semantic signals about data relationships – making tables one of the cleanest content formats for AI parsing, chunking, and citation.

5. What is the ideal paragraph length for GEO?

The optimal paragraph length for RAG extraction is 40-60 words (Discovered Labs, 2026). Paragraphs under 40 words often lack sufficient context to function as standalone answer chunks. Paragraphs over 80 words contain too much information for clean extraction and produce cut-off passages. This target applies to body paragraphs; opening sentences under headings can be shorter if they function as direct, complete answers.

6. How does AI use headings differently from Google?

Google uses headings primarily as keyword placement and document structure signals. AI systems use headings to understand what each individual section is about before reading it – and match headings semantically to user sub-queries during fan-out. A question-format heading like ‘How Does RAG Select Which Passages to Extract?’ directly maps to the sub-query an AI might generate. A generic heading like ‘RAG Overview’ does not trigger the same semantic match. Converting key headings to question format is one of the highest-ROI, lowest-effort GEO changes available.

7. Why does author authority affect AI citation rates?

AI systems are trained to minimise hallucination and prioritise trustworthy sources. Named, credentialled authors with verifiable external presence (LinkedIn, published work, industry mentions) signal to AI systems that content is written by a genuine domain expert – reducing the risk of citing unreliable information. BrightEdge (2025) put author authority at 16% of AI citation decision weight, up from 8% in 2024. Anonymous or ‘content team’ bylines carry no authority signal and are treated as a citation penalty by some AI scoring models.

8. How does page speed affect GEO performance?

Pages with First Contentful Paint under 0.4 seconds average 6.7 AI citations, while slower pages average just 2.1 (SE Ranking, 2025). AI crawlers have tight retrieval time limits – they cannot wait for slow-loading pages to render. Slow sites end up only partially indexed by AI crawlers, reducing the volume of content available for RAG retrieval. Core Web Vitals performance is a baseline technical requirement for competitive GEO, not an optional enhancement.

9. What happens if AI crawlers are blocked on my site?

If GPTBot, PerplexityBot, or ClaudeBot are blocked in your robots.txt or Cloudflare settings, those AI platforms cannot crawl any of your pages. Google-Extended works slightly differently: it is a robots.txt control token rather than a separate crawler, and disallowing it opts your content out of Google’s Gemini products. Either way, blocked content is invisible to those systems regardless of its quality, structure, or optimisation. This is the single most common and most damaging GEO mistake. Check your robots.txt file and Cloudflare Bot Management settings before any other GEO work – particularly if you use Cloudflare, which added a one-click option to block AI bots in 2024 and began blocking AI crawlers by default for newly added domains in 2025.
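The robots.txt half of that check can be sketched with Python's standard `urllib.robotparser`. The sample rules and the helper name `blocked_ai_agents` are illustrative assumptions; note that this only reads robots.txt rules and cannot detect blocking applied at the Cloudflare level.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the answer above.
AI_USER_AGENTS = ["GPTBot", "PerplexityBot", "Google-Extended", "ClaudeBot"]


def blocked_ai_agents(robots_txt, url="https://example.com/"):
    """Return the AI user agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical robots.txt that blocks GPTBot and allows everyone else.
    sample = (
        "User-agent: GPTBot\n"
        "Disallow: /\n"
        "\n"
        "User-agent: *\n"
        "Allow: /\n"
    )
    print(blocked_ai_agents(sample))
```

To run it against a live site, paste the contents of your own `/robots.txt` into `sample`, or fetch the file first and pass its text in.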

10. What does ‘citation displacement’ mean for bloggers?

Citation displacement occurs when a competitor publishes higher-quality content on your target topic, causing AI systems to cite their content instead of yours – even if you have not changed anything. Because AI systems actively compare sources in real time, improvements by competitors directly reduce your citation frequency. The protection is a consistent quarterly content refresh cadence: adding new data, updating examples, expanding your FAQ sections, and maintaining clear recency signals. One-time optimisation is not sufficient in the competitive GEO environment of 2026.

11. How many FAQs should each blog post have for GEO?

A minimum of 15 well-structured FAQs per GEO-priority post is recommended. Each FAQ should use an H3 heading for the question and a direct 2-4 sentence answer. Questions should come from real user data: Google’s People Also Ask, Reddit threads, Quora, and ChatGPT prompts on your topic. Add FAQPage schema markup to all FAQ sections. Content in Q&A format gets extracted by AI systems 2.3x more often than prose-only content, and only 34% of websites currently use FAQPage schema – making this one of the largest competitive gaps in GEO today.
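For bloggers who are not using a plugin, FAQPage markup can be generated as JSON-LD with a few lines of Python. This is a minimal sketch using the schema.org `FAQPage`, `Question`, and `Answer` types; the helper name and the sample question are assumptions for illustration.

```python
import json


def build_faq_schema(faqs):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


if __name__ == "__main__":
    faqs = [
        (
            "What is the ideal paragraph length for GEO?",
            "The optimal paragraph length for RAG extraction is 40-60 words.",
        )
    ]
    # Paste the output inside a <script type="application/ld+json"> tag.
    print(json.dumps(build_faq_schema(faqs), indent=2))
```

The question and answer text in the markup should match what is visible on the page.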

12. How is GEO measured if AI citations don’t appear in Google Analytics?

GEO measurement uses four parallel tracking methods: (1) Google Search Console – monitor rising impressions for question-based queries without corresponding CTR growth, which indicates AI Overview appearances; (2) GA4 referral tracking – create segments tracking sessions from chatgpt.com, perplexity.ai, and copilot.microsoft.com; (3) Branded search volume in GSC – increasing brand searches indicate growing AI citation awareness; (4) Manual AI citation testing – monthly searches of your 10 priority queries in ChatGPT, Perplexity, and Google AI Overviews to directly track citation appearances.
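The referral-tracking method (2) comes down to matching referrer hostnames against a list of AI platforms. The sketch below shows that matching logic on exported referrer URLs; it is not GA4's own API, and the function name and label mapping are assumptions.

```python
from urllib.parse import urlparse

# Domains listed in the answer above, mapped to assumed display labels.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer_url):
    """Return the AI platform label for a referrer URL, or None if it is not one."""
    # hostname is None when the URL has no scheme, so fall back to "".
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, name in AI_REFERRER_HOSTS.items():
        # Match the domain itself or any subdomain such as www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return name
    return None


if __name__ == "__main__":
    for ref in ["https://chatgpt.com/", "https://www.google.com/search"]:
        print(ref, "->", ai_source(ref))
```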

13. Does GEO require technical SEO knowledge for bloggers?

Basic technical knowledge helps – particularly for checking robots.txt, implementing schema markup via plugins like Rank Math, and verifying Core Web Vitals. Most impactful GEO changes are content-focused and accessible without technical skills: writing BLUF introductions, using question-format headings, keeping paragraphs to 40-60 words, adding 15+ FAQs, and citing sources for every statistic. Technical GEO (AI bot access, schema markup) can be handled through WordPress plugins without coding for most bloggers.

14. What is an llms.txt file and do bloggers need one?

An llms.txt file is an emerging standard that helps AI systems understand your site structure, identify your most important pages, and access efficiently structured content summaries. Similar to robots.txt in concept, it provides AI crawlers with a curated index of your key pages and their descriptions. While not yet universally adopted, Search Engine Land recommends it as a forward-looking GEO best practice in 2026. For WordPress bloggers, adding a simple llms.txt is a low-effort technical step that can improve AI crawl efficiency and citation accuracy.
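As a rough illustration, the sketch below generates a simple llms.txt in the Markdown layout used by the llms.txt proposal as I understand it: a title, a one-line summary, and a list of linked pages with descriptions. The site name, URLs, section title, and helper name are all hypothetical, and the format may change as the standard evolves.

```python
def build_llms_txt(site_name, summary, pages):
    """Build llms.txt content from (title, url, description) tuples."""
    lines = [
        f"# {site_name}",   # H1 with the site or project name
        "",
        f"> {summary}",     # short blockquote summary
        "",
        "## Key pages",     # assumed section title
        "",
    ]
    for title, url, description in pages:
        lines.append(f"- [{title}]({url}): {description}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    content = build_llms_txt(
        "Example Blog",  # hypothetical site
        "Guides on generative engine optimisation for bloggers.",
        [
            (
                "How GEO Works",
                "https://example.com/how-geo-works",
                "Deep dive into the RAG pipeline and query fan-out.",
            )
        ],
    )
    print(content)
```

Save the output as `llms.txt` at the root of your site, alongside robots.txt.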

15. How long does it take for GEO optimisation to show results?

Early signals – rising impressions in GSC, first appearances in AI Overview results – typically emerge within four to eight weeks of applying GEO best practices to existing content that already ranks. Meaningful, consistent AI citation frequency across multiple platforms develops over three to six months with regular content publishing within a topical cluster. Refreshing existing high-ranking posts with GEO enhancements (FAQs, BLUF structure, statistics) produces faster results than publishing new posts because ranking authority is already established.

Conclusion: Engineer Your Content – Don’t Just Write It

Every GEO tactic makes perfect sense once you understand the mechanism. Your introduction matters so much because RAG extracts 44% of citations from opening sections. Tables earn 2.5x more citations because they are structurally ideal RAG extraction units. Statistics improve visibility by up to 40% because fact density is a measured scoring signal. Question-format headings outperform generic ones because they match the semantic sub-queries generated during fan-out.

Understanding the mechanism does not make GEO complicated – it makes it deliberate. Instead of applying tactics blindly, you apply them knowing exactly which stage of the citation pipeline they address and why they work.

The practical path for bloggers is clear and achievable: check that AI crawlers are not blocked on your site, apply BLUF structure to your introductions, convert key headings to question format, keep paragraphs in the 40-60 word range, add 15+ FAQs with schema markup, attribute every statistic to its source, and build your author authority visibly. These changes, applied consistently across a topical content cluster, compound into genuine citation authority over months.

The AI systems mediating your audience’s information needs are crawling your site right now. With the right structure, the right signals, and the right content engineering – they will find what they need to cite you.

Devyansh Tripathi

